Anastasiya Kazakova (Cyber Diplomacy Knowledge Fellow and Geneva Dialogue Project Coordinator, DiploFoundation).

Leo Nakamura was asleep when the anomaly appeared. It didn’t trigger an alert. It didn’t crash anything. A piece of logic inside LibraCore, the open source library he maintained mostly at night, long after it stopped being ‘just a hobby’, had quietly started evaluating differently. The kind of change that passes every automated test and still ruins your week.

By 2027, LibraCore ran inside electricity grids in northern Europe, telecom systems in Southeast Asia, hospital networks in North America, and national digital identity platforms on three continents. Nobody installed it deliberately. It arrived as a dependency, bundled and inherited, like a piece of furniture no one remembers buying. When a security researcher eventually published the flaw in detail, Leo read it on his phone before he was fully awake. ‘This has been here for years,’ he said, to no one.

His inbox filled before he could respond. Requests for confirmation. Requests for restraint. Requests to take on a responsibility he had never agreed to carry, for systems run by organisations far larger and more powerful than he was. He typed one message to the maintainer list, knowing it would be read far beyond it:

“I can change the code. I can’t see where it runs. I can’t decide how much risk is acceptable.”

The systems kept running. The vulnerability still existed. And the question remained open: who acts first, and who carries the consequences if they don’t?

LibraCore is fictional. The situation it describes is not.

In March 2026, the Geneva Dialogue on Responsible Behaviour in Cyberspace placed around 30 experts inside this scenario. The Geneva Dialogue is an international multistakeholder process that examines what UN cyber norms mean in practice, as much for the companies, technical communities (including open source software (OSS) communities), academia, and civil society that operate cyberspace as for the governments that negotiate its rules. The participants were critical infrastructure operators, cybersecurity researchers, technology vendors, policy officials, and civil society specialists. Assigned roles, two rounds of escalating pressure, three breakout groups, Chatham House Rule throughout. The objective was not to find solutions. It was to stress-test the agreed UN cyber norms against operational reality, and to assess whether they provide meaningful guidance to the practitioners, operators, and other non-state stakeholders who must act when things go wrong.

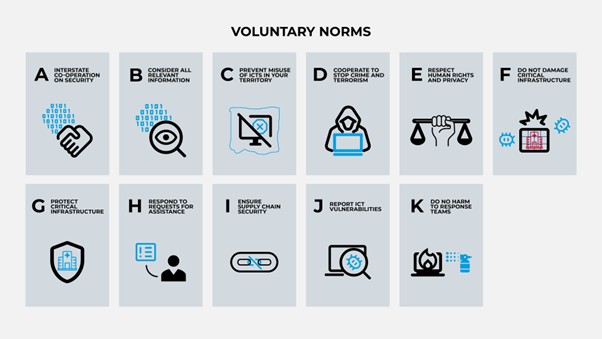

The eleven non-binding norms of responsible state behaviour in cyberspace, agreed and endorsed at the UN, cover protecting critical infrastructure, cooperating on threats, and disclosing vulnerabilities responsibly. They are a genuine diplomatic achievement, and one addressed exclusively to states, in a domain built and operated primarily by people like Leo Nakamura.


What the scenario revealed

What the exercise surfaced was not a list of missing policies. It was a set of structural contradictions that we managed to capture during the scenario-based discussion with experts.

Nobody owns the problem, and everyone can point at someone else. When the LibraCore vulnerability surfaced, every actor in the room could identify a principled reason why the primary obligation lay elsewhere. Critical infrastructure operators argued they cannot secure software they cannot see inside the products they deploy. Vendors noted they have no authority over the upstream code their products incorporate. Maintainers, i.e. volunteers who shared their work freely, never accepted contractual responsibility for the systems that came to depend on it. Governments, for their part, often lack both the technical capacity to assess open source code and a legislative mandate to act before an incident is formally declared. The result is not a gap in awareness. It is a structural gap in accountability: a system in which responsibility is distributed across many actors, but the mechanisms for converting that distributed responsibility into specific, enforceable action do not exist.

In a real crisis, leadership goes to whoever steps forward, not to whoever is mandated. All three breakout groups independently identified the same problem: in the critical first hours after a major OSS vulnerability becomes known, no institution is reliably positioned to lead. Government agencies have convening authority but limited technical reach. Vendors can move quickly within their own product perimeters but cannot compel action across the wider ecosystem. Critical infrastructure operators are often the most exposed and the least informed. In practice, as the Log4Shell response in 2021 illustrated, coordination is led by whoever has the technical knowledge, the operational relationships, and the willingness to act. The fictional LibraCore exercise reproduced this pattern. The more productive question is not who should formally be in charge, but what pre-committed arrangements among vendors, OSS foundations, and sector-specific bodies could provide legitimate coordination capacity before a crisis requires it, without waiting for a formal incident declaration.

Coordinated vulnerability disclosure (CVD) breaks down under geopolitical pressure. The scenario tested the standard CVD sequence (private notification, coordinated fix, managed disclosure) and found it contested at every step. The sequencing question alone proved difficult: releasing a patch effectively discloses the vulnerability’s technical details, creating a race between defenders and those who might reverse-engineer an exploit. More fundamentally, when a senior official implied the flaw might not have been accidental, information flows fractured. Cyber- and technology-related restrictions, sanctions regimes, mandatory state-first reporting requirements, and political distrust can each independently block the cross-border coordination that timely remediation depends on. Non-state disclosure channels (such as vendor-to-vendor coordination, researcher-to-maintainer communication, and foundation-mediated processes) demonstrated greater resilience to these pressures, precisely because they operate outside the state-to-state channels that geopolitics can disrupt. This is not a novel observation. What the scenario sharpened is a subtler point: geopolitical pressure does not only restrict actors who are legally bound by sanctions or reporting mandates. It changes the behaviour of those who are not. Researchers self-censor. Vendors quietly narrow the circle of who they inform. Maintainers disengage. The chilling effect travels well beyond the formal restrictions, and that is the part the current normative framework is least equipped to address. Norm J of the framework agreed by the UN Group of Governmental Experts (GGE) calls on states to promote responsible vulnerability disclosure. It does not address what happens when the political climate makes responsible behaviour harder, even for those nominally free to act.

Sovereignty, pursued as security, can produce the opposite. When, in the fictional scenario, a regional bloc of countries announced plans to develop a trusted sovereign version of LibraCore, framed as precaution rather than retaliation, the expert discussion was broadly sceptical. Sovereign forks fragment maintenance capacity, divert resources from proven codebases, and introduce new vulnerabilities into what had previously been a single, widely reviewed codebase. The AI dimension sharpened this concern: fragmented but structurally similar codebases are particularly susceptible to automated exploit replication across jurisdictions. There is also a broader strategic logic at stake. When states and organisations share the same software, they share the same vulnerabilities, and that shared exposure creates a common interest in securing the ecosystem together. Fragmenting along national lines weakens that incentive, reduces cooperation, and makes the environment more permissive for offensive action. Sovereignty, pursued as a security measure, can corrode the very interdependence that has kept collective security cooperation functioning.

Where norms help, where they fall short, and where they may work against each other

The norms framework has two distinct types of gap, and they require different responses.

The first is a specificity gap: norms that are sound in principle but insufficiently detailed to guide operational decisions. Norm J on responsible vulnerability disclosure is the clearest example. The norm sets a direction but leaves most of the practical questions unanswered: who notifies whom, in what sequence, within what timeframe, across which jurisdictions. In this space, industry practice has not merely implemented the norm: it has filled the operational space the norm left largely empty. Major vendors have developed detailed CVD policies specifying disclosure windows, defining responsibilities, and establishing multi-vendor coordination processes. Bug bounty platforms have created standardised channels and legal safe harbours for researchers. The normative framework could usefully recognise and codify these existing practices rather than develop parallel guidance from scratch.

The second type of gap is more fundamental: a structural mismatch between what the norm assumes and what the domain actually looks like. Norm G calls on states to take “appropriate measures” to protect critical infrastructure. The scenario-based discussion demonstrated that states often lack the technical capacity, the legal mandate, and the operational reach to act on this expectation when the infrastructure is privately operated and built on volunteer-maintained code. This is not a gap that more detailed implementation guidance can close. It points to a deeper question about who the norm is actually asking to act, and whether a state-centred expectation can produce results in a domain where the relevant capacity, knowledge, and decision-making authority sit overwhelmingly with non-state actors. Addressing this may require not just better guidance, but a more explicit extension of normative expectations to the vendors, foundations, and maintainers who are, in practice, the ecosystem’s primary operators.

What comes next

The actors with the greatest technical knowledge, operational capacity, and speed of action in a crisis like LibraCore are overwhelmingly non-state: vendors who ship the software, foundations that host the projects, researchers who find the flaws, operators who run the systems. A governance framework that relies primarily on states to coordinate, fund, and respond will continue to encounter the structural limitations this consultation exposed. This does not mean the UN cyber norms are without value. It means the normative framework was built with a specific set of actors in mind, and the ecosystem it seeks to govern is operated primarily by actors it was not designed to reach.

The Geneva Dialogue’s next step is to translate these structural dilemmas into a practical discussion of non-state actor responsibilities. That discussion will feed into Chapter 3 of the Geneva Manual, where we aim to collect feedback from non-state actors and identify areas of action for implementing the agreed framework.

And the stress-testing continues. The second thematic cycle takes on a harder version of the same challenge: emerging technologies and cybersecurity. Artificial intelligence, quantum computing, and other fast-moving technologies are reshaping the threat landscape faster than governance frameworks can follow. This will be explored through a new scenario, with a new group of experts, at the Geneva Cyber Week on 8 May. If you are interested in joining the session on site or online, get in touch via genevadialogue@diplomacy.edu.

We invite you to join the conversation.